Welcome!

This page is devoted to "Sparse Bayesian Modelling". It is maintained by Mike Tipping as part of his personal website, and www.relevancevector.com also redirects here.

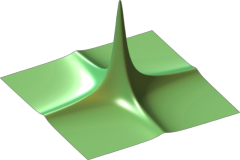

"Sparse Bayesian Modelling" describes the application of Bayesian "automatic relevance determination" (ARD) methodology to predictive models that are linear in their parameters. The motivation behind the approach is that one can infer a flexible, nonlinear, predictive model which is accurate and at the same time makes its predictions using only a small number of relevant basis functions which are automatically selected from a potentially large initial set.

The "relevance vector machine" (RVM) is a special case of this idea, applied to linear kernel models, and may be of interest due to similarity of form with the popular "support vector machine".
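To make the "linear kernel model" form concrete, here is a minimal sketch in Python. It assumes a Gaussian kernel on scalar inputs and hand-picked weights; the function names, the `width` parameter and the example values are all hypothetical and are not taken from the SparseBayes software. The model has one candidate basis function per training input, and after sparse Bayesian learning most weights are effectively zero; the inputs whose weights survive are the "relevance vectors".

```python
import math


def gaussian_kernel(x, z, width=1.0):
    """Gaussian kernel between two scalar inputs ('width' is an assumed length-scale)."""
    return math.exp(-((x - z) ** 2) / (width ** 2))


def kernel_design_matrix(inputs, width=1.0):
    """Build the N x (N+1) design matrix: a bias column, then one kernel column per input."""
    return [[1.0] + [gaussian_kernel(x, z, width) for z in inputs] for x in inputs]


def predict(x_new, inputs, weights, width=1.0):
    """Evaluate y(x) = w_0 + sum_n w_n K(x, x_n); zero weights are skipped."""
    y = weights[0]
    for w, z in zip(weights[1:], inputs):
        if w != 0.0:
            y += w * gaussian_kernel(x_new, z, width)
    return y


inputs = [0.0, 1.0, 2.0]
Phi = kernel_design_matrix(inputs)

# Hypothetical sparse solution: only the bias and the basis function
# centred on the middle input are retained.
weights = [0.5, 0.0, 2.0, 0.0]
y_mid = predict(1.0, inputs, weights)
```

Because the prediction loop skips zero weights, the cost of a prediction scales with the number of retained basis functions rather than with the size of the initial set.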

Below is a list of downloadable relevant papers, tutorial slides and a free software implementation (for Matlab®). Thanks for your continued interest!

Relevant Papers

An introductory paper on Bayesian inference in machine learning, focusing on sparse Bayesian models, is available:

- Tipping, M. E. (2004). Bayesian inference: An introduction to principles and practice in machine learning. In O. Bousquet, U. von Luxburg, and G. Rätsch (Eds.), Advanced Lectures on Machine Learning, pp. 41–62. Springer. [Abstract] [PDF] [gzipped PostScript]

A fairly comprehensive full-length journal paper on sparse Bayesian learning:

- Tipping, M. E. (2001). Sparse Bayesian learning and the relevance vector machine. Journal of Machine Learning Research 1, 211–244. [Abstract] [Available from JMLR]

There are a couple of minor typos in the above paper.

Two early conference publications on the Relevance Vector Machine:

- Tipping, M. E. (2000). The Relevance Vector Machine. In S. A. Solla, T. K. Leen, and K.-R. Müller (Eds.), Advances in Neural Information Processing Systems 12, pp. 652–658. MIT Press. [Abstract] [gzipped PostScript]

- Bishop, C. M. and M. E. Tipping (2000). Variational relevance vector machines. In C. Boutilier and M. Goldszmidt (Eds.), Proceedings of the 16th Conference on Uncertainty in Artificial Intelligence, pp. 46–53. Morgan Kaufmann. [Abstract] [PDF] [gzipped PostScript]

Note that the "variational" relevance vector machine is pretty much identical to the non-variational version, but its parameter estimation procedure is a lot slower.

Exploiting the sparse Bayes methodology to realise "sparse kernel PCA":

- Tipping, M. E. (2001). Sparse kernel principal component analysis. In Advances in Neural Information Processing Systems 13. MIT Press. [Abstract] [gzipped PostScript]

Robust sparse Bayesian regression:

- Faul, A. and M. E. Tipping (2001). A variational approach to robust regression. In G. Dorffner, H. Bischof, and K. Hornik (Eds.), Proceedings of ICANN'01, pp. 95–102. Springer. [Abstract] [gzipped PostScript]

Some theoretical analysis of marginal likelihood optimisation and sparsity:

- Faul, A. C. and M. E. Tipping (2002). Analysis of sparse Bayesian learning. In T. G. Dietterich, S. Becker, and Z. Ghahramani (Eds.), Advances in Neural Information Processing Systems 14, pp. 383–389. MIT Press. [Abstract] [gzipped PostScript]

An accelerated learning algorithm:

- Tipping, M. E. and A. C. Faul (2003). Fast marginal likelihood maximisation for sparse Bayesian models. In C. M. Bishop and B. J. Frey (Eds.), Proceedings of the Ninth International Workshop on Artificial Intelligence and Statistics, Key West, FL, Jan 3-6. [Abstract] [PDF] [gzipped PostScript]

This is the algorithm of choice for implementing a sparse Bayes model. It is an order of magnitude faster than the original, uses less memory and analytically (rather than numerically) "prunes" irrelevant basis functions. It is utilised by the SparseBayes V2 software described below.
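As a rough illustration of what analytic pruning means, the Python sketch below follows a reading of the hyperparameter update in the paper above. Each candidate basis function has a "sparsity" factor s and a "quality" factor q, and its hyperparameter alpha is set to s²/(q² − s) when q² > s and to infinity otherwise, which removes that basis function from the model exactly rather than waiting for alpha to grow past a numerical threshold. The function name and the numeric inputs are hypothetical, and the computation of s and q from data is omitted.

```python
import math


def reestimate_alpha(sparsity, quality):
    """Return the updated hyperparameter for one basis function.

    A finite value keeps (or adds) the basis function; math.inf prunes it.
    """
    if quality ** 2 > sparsity:
        return sparsity ** 2 / (quality ** 2 - sparsity)
    return math.inf


# Hypothetical factor values, chosen only to exercise both branches.
alpha_kept = reestimate_alpha(1.0, 2.0)    # q^2 = 4 > s = 1: finite alpha
alpha_pruned = reestimate_alpha(4.0, 1.0)  # q^2 = 1 <= s = 4: pruned
```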

Tutorial Slides

Copies of the slides from my 2003 lectures at the Tübingen "Machine Learning Summer School" are available in ".ps.gz" format:

- Introduction to Bayesian Inference [180 KB]

- Bayesian Inference: Marginalisation [147 KB]

- Sparse Bayesian Models and the "Relevance Vector Machine" [1.18 MB]

- Sparse Bayesian Models: Analysis, Optimisation and Applications [2.68 MB]

Software Implementations

My currently favoured implementation is the new "V2" SparseBayes software release for Matlab® (March 2009). This is a library of routines that implement the generic Sparse Bayesian model, for regression and binary classification, with inference based on the accelerated algorithm detailed in the paper "Fast marginal likelihood maximisation for sparse Bayesian models" (see above). The "V2" library is freely available from my downloads page. Note that the SparseBayes package for Matlab® is free software, distributed by Vector Anomaly subject to the GNU General Public License, version 2. Please see the file "licence.txt" included with the distribution for details.

Note also that this new "V2" software is intended to supersede the earlier "V1" version. For reference purposes, a simpler baseline Matlab® implementation is also available from the same downloads page. This "SparseBayes V1.1" code (which dates back to 2002) implements the original sparse Bayesian learning algorithm, and so is relatively slow. It is nevertheless retained for completeness.